Monday, 1 June 2026

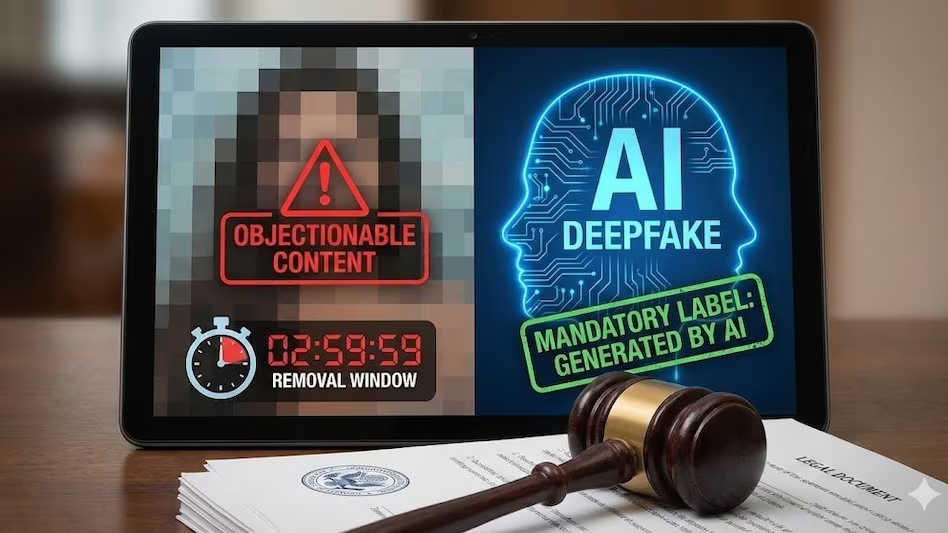

The Ministry of Electronics and Information Technology (MeitY) has officially notified amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, focused on regulating artificial intelligence (AI)-generated content and deepfakes. The amended rules take effect on February 20, giving social media platforms and other intermediaries a 10-day compliance window. Notably, the government has now defined “deepfakes” explicitly, describing them as artificially created or modified audio, visual, or audio-visual content that appears real and is indistinguishable from genuine individuals or events.

One of the most significant changes is a much shorter takedown deadline for deepfakes and inappropriate content. Platforms must now remove such content within three hours of it being reported or detected, down from the previous 36 hours. At the same time, the government has relaxed the earlier guidelines on AI content labelling: instead of requiring labels to cover at least 10 percent of the content's display area, the revised rules only require AI-generated content to be labelled “prominently,” giving platforms greater flexibility.

The amendments also introduce stricter accountability measures for users and platforms. Social media intermediaries must inform users every three months of the potential penalties for violations, including post removal, account suspension, account termination, and possible legal action. Platforms are also required to help identify offenders and, where necessary, disclose their identity to complainants. In addition, users must declare whether their posts contain synthetically generated information, and platforms must deploy tools to verify such declarations and ensure that AI-generated content is visibly labelled.
